Architecting Robust Serverless Applications With AWS Lambda

As businesses strive to increase agility and lower operational costs, tech leaders are reevaluating how they design serverless systems. A key building block is AWS Lambda, the foundation of serverless applications on AWS. Lambda functions are stateless, ephemeral, and run on AWS-managed infrastructure, which makes them suitable for a wide range of application workflows. However, this architecture raises questions about how to improve reliability, reduce latency, and keep the platform secure without the need for complex hardware. This article presents best practices for designing serverless applications in line with the AWS Well-Architected Framework. Join us as we explore these strategies for building robust, efficient, and secure serverless systems.

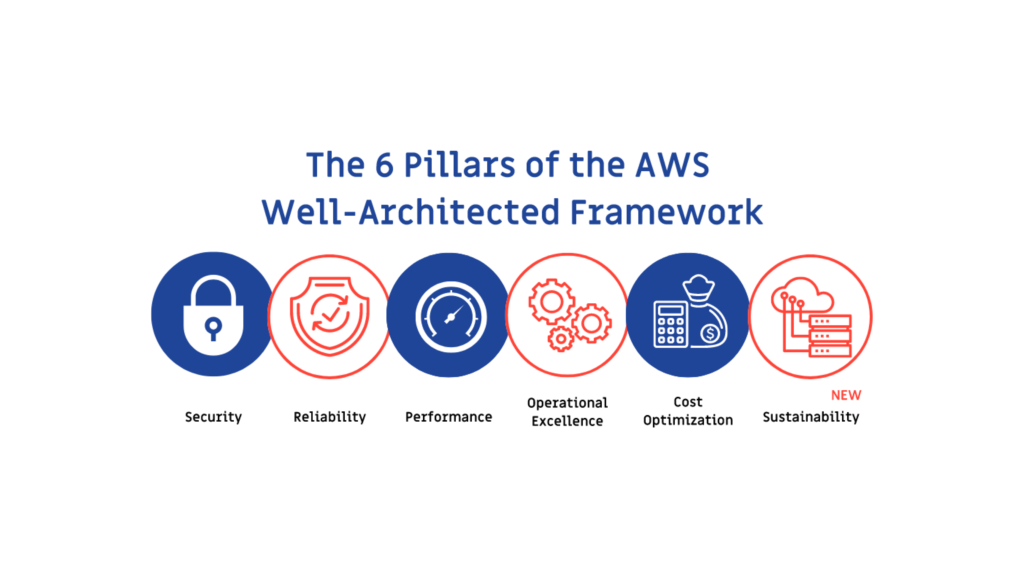

The 6 Pillars Of The AWS Well-Architected Framework

The AWS Well-Architected Framework is a set of principles that focus on six key application aspects that significantly impact businesses. These principles are known as the “6 Pillars” and are as follows:

Operational Excellence: This pillar focuses on effectively supporting the development and running of workloads. It also aims to gain deeper insights into the operations and continuously improve supporting processes to deliver business value.

Security: This pillar focuses on securing and protecting data, systems, and assets. It aims to make systems use the full potential of cloud technologies for improved security.

Reliability: This pillar ensures workloads perform their intended function correctly and consistently when expected.

Performance Efficiency: This pillar focuses on using computing resources efficiently to meet system requirements, and on maintaining that efficiency as demand changes.

Cost Optimization: This pillar ensures that the systems deliver business value at the lowest possible price point.

Sustainability: This pillar focuses on addressing your business’s long-term environmental, economic, and societal impacts. It aims to guide companies in designing applications, maximizing resource use, and establishing sustainability goals.

How To Implement These Pillars For Your Serverless Application?

Security

Serverless applications face particular security challenges because they are built on Function as a Service (FaaS) and often rely on third-party components and libraries connected via networks and events. As a result, attackers can exploit vulnerable dependencies to damage or compromise your application. However, there are several strategies that organizations can build into their regular routines to address these challenges and protect their serverless applications from potential threats.

- Limit Lambda privileges: Applying the principle of least privilege to your AWS Lambda functions reduces the risk of over-privileged functions. In practice, this means assigning each function an IAM role that grants access only to the services and resources it needs to complete its task.

- Use Virtual Private Clouds: Deploying AWS resources within a customized virtual network, such as Amazon Virtual Private Cloud (VPC), can increase the security of your serverless application. For example, you can configure security groups as virtual firewalls to control traffic to and from relational databases and EC2 instances. Using VPCs can also significantly decrease the number of exploitable entry points and loopholes.

- Understand and determine necessary resource policies: Resource-based policies restrict access to a service component by specifying which actions are allowed and which roles may perform them. Access can be narrowed further by conditions such as source IP address, function version, or function event source. These policies are evaluated at the IAM level before the AWS service applies any authentication of its own.

- Use an authentication and authorization mechanism: Authentication and authorization regulate access to specific resources and are an essential part of a well-designed serverless API. They confirm the identity of a client or user and determine whether that identity may access a given resource, which prevents unauthorized users from interfering with the service.
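The least-privilege guidance in the list above can be sketched as a small helper that builds an IAM policy document for a single resource. This is a minimal illustration, not a complete security setup: the helper name `least_privilege_policy`, the `Orders` table, and the account ID in the ARN are all hypothetical, and the wildcard check is just one simple guard you might choose to apply.

```python
import json


def least_privilege_policy(resource_arn, actions):
    """Build an IAM policy document allowing only the given actions on one resource."""
    for value in [resource_arn, *actions]:
        # Reject wildcards so the policy cannot silently grant broad access.
        if "*" in value:
            raise ValueError(f"wildcard not allowed in least-privilege policy: {value!r}")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": sorted(actions),
                "Resource": resource_arn,
            }
        ],
    }


# Hypothetical table ARN, used only for illustration.
ORDERS_TABLE_ARN = "arn:aws:dynamodb:us-east-1:123456789012:table/Orders"

policy = least_privilege_policy(
    ORDERS_TABLE_ARN, ["dynamodb:PutItem", "dynamodb:GetItem"]
)
print(json.dumps(policy, indent=2))
```

The resulting document could be attached to the function’s execution role, so the function can read and write one table and nothing else.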

Operational Excellence

- Use application, business, and operations metrics: Identifying key performance indicators (KPIs) for business, customer, and operations outcomes gives you a quick, high-level view of how an application is performing. For example, an eCommerce business owner may want KPIs related to customer experience, such as perceived latency, duration of the checkout process, and ease of choosing a payment method. Operational KPIs such as continuous integration and delivery, feedback time, and resolution time let you monitor your application’s operational stability over time.

- Understand, analyze, and alert on metrics provided out of the box: Investigate the behaviour of the AWS services your application uses and take advantage of the standard metrics they provide out of the box. For example, an airline reservations system that uses AWS Lambda, AWS Step Functions, and Amazon DynamoDB automatically emits metrics to Amazon CloudWatch when a customer requests a booking, without affecting the application’s performance. These metrics help you track how your application is performing, so you only need to set up custom metrics for what is unique to your application.
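For the custom metrics that the standard ones do not cover, one option is the CloudWatch embedded metric format, in which a function writes a structured JSON log line and CloudWatch extracts the metric from it. The sketch below assumes that approach; the namespace `ECommerceApp`, the `Service` dimension, the `CheckoutDuration` metric, and the helper `emf_record` are hypothetical names chosen to match the eCommerce example above.

```python
import json
import time


def emf_record(namespace, service, name, value, unit="Milliseconds", timestamp_ms=None):
    """Return a log record in the CloudWatch embedded metric format."""
    if timestamp_ms is None:
        timestamp_ms = int(time.time() * 1000)
    return {
        "_aws": {
            "Timestamp": timestamp_ms,
            "CloudWatchMetrics": [
                {
                    "Namespace": namespace,
                    "Dimensions": [["Service"]],
                    "Metrics": [{"Name": name, "Unit": unit}],
                }
            ],
        },
        # Dimension value and metric value live at the top level of the record.
        "Service": service,
        name: value,
    }


def handler(event, context):
    started = time.perf_counter()
    # ... the business logic for the checkout step would run here ...
    elapsed_ms = (time.perf_counter() - started) * 1000
    # In Lambda, stdout goes to CloudWatch Logs, where the metric is extracted.
    print(json.dumps(emf_record("ECommerceApp", "checkout", "CheckoutDuration", elapsed_ms)))
    return {"statusCode": 200}
```

Because the metric travels in the log stream, the function does not make an extra API call on the request path to publish it.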

Reliability

- High availability and managing failures: Systems have a significant chance of experiencing periodic failures, particularly when one service depends on another. Even when systems or services don’t fail completely, they may suffer partial failures. Applications must therefore be designed to handle component failures gracefully, and the architecture should be capable of both fault detection and self-healing. For example, AWS Lambda is designed to be fault tolerant: it runs functions across multiple Availability Zones, so if one zone has trouble, invocations can be served from another.

- Throttling: Throttling prevents APIs from receiving an excessive number of requests. For example, Amazon API Gateway throttles calls to your API, restricting the number of requests a client can submit within a specific time frame. All clients are subject to these restrictions. API Gateway limits both the steady-state rate and the burst of requests submitted. By restricting excessive API use, this strategy maintains system performance and minimizes degradation. In a large-scale, global system with millions of users and a significant volume of API calls every second, it’s crucial to throttle these calls so that they do not cause the system to lag and perform poorly.
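The steady-state rate and burst limits described above map naturally onto a token bucket, which is the algorithm API Gateway documents for its throttling. The class below is a simplified, single-process sketch of that idea, not API Gateway’s implementation; the `TokenBucket` name and the injectable `clock` parameter are assumptions made for illustration and testing.

```python
import time


class TokenBucket:
    """Allow `rate` requests per second on average, with bursts of up to `burst`."""

    def __init__(self, rate, burst, clock=time.monotonic):
        self.rate = rate          # tokens added per second (steady-state rate)
        self.burst = burst        # bucket capacity (maximum burst size)
        self.clock = clock
        self.tokens = float(burst)
        self.updated = clock()

    def allow(self):
        """Return True if a request may proceed, consuming one token."""
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.burst, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # caller would typically respond with HTTP 429


bucket = TokenBucket(rate=100, burst=200)
if not bucket.allow():
    print("throttled")
```

A full bucket lets a client send a short burst at once, while the refill rate caps sustained traffic.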

Performance Efficiency

- Reduce cold starts: Although cold starts reportedly account for less than 0.25 per cent of AWS Lambda requests, they can significantly impact application performance. A cold start can, in some cases, stretch code execution to as much as 5 seconds, which is particularly problematic for real-time applications that must respond in milliseconds. The Well-Architected best practices for performance therefore advise reducing the number of cold starts. Factors to consider include how quickly instances start, which languages and frameworks are used, and how functionality is separated across functions. For instance, functions written in Go or Python typically start faster than those written in Java or C#.

- Integrate with managed services directly over functions: When no custom logic or data transformation is required, use native integrations among managed services instead of Lambda functions. Native integrations help achieve optimal performance with fewer resources to manage. For example, Amazon API Gateway can use the AWS integration type to connect natively to other AWS services. With AWS AppSync, you can use VTL to integrate directly with Amazon Aurora and Amazon OpenSearch Service.
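One common way to keep initialization short, which the cold-start item above only hints at under functional separation, is to defer loading a dependency until the code path that needs it actually runs. The sketch below assumes a handler with a rarely used export path; the `action` and `row` event fields are hypothetical, and the standard-library `csv` module stands in for a heavier dependency.

```python
import io


def handler(event, context):
    """Serve the common path with minimal imports; load extras only when needed."""
    if event.get("action") == "export":
        # Deferred import: the cost is paid only by invocations that export,
        # not by every cold start. `csv` stands in for a heavy library.
        import csv

        buffer = io.StringIO()
        csv.writer(buffer).writerow(event.get("row", []))
        return {"statusCode": 200, "body": buffer.getvalue()}

    # Common path: nothing beyond the module-level imports is required.
    return {"statusCode": 200, "body": "ok"}
```

Whether this helps depends on how often the rare path runs; splitting it into its own function is the alternative when the two paths have very different dependencies.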

Cost Optimization

- Effective strategy: Minimize external calls and function code initialization. To optimize cost, it is essential to understand the impact of initializing a function. When a function is initialized, all its dependencies are imported as well, so every library you include adds to the startup time. It’s crucial to minimize external calls and remove dependencies wherever feasible, although they cannot be avoided entirely for operations such as ML and other complex functionality. Recognizing and limiting the resources that your AWS Lambda functions access while running directly affects the value delivered per invocation, so reducing dependence on other managed services and third-party APIs is critical. Functions sometimes pull in application dependencies that may not be appropriate for ephemeral environments.

- Optimize code initialization: AWS Lambda reports the time it takes to initialize application code in Amazon CloudWatch Logs. Use these metrics to track cost and performance, because Lambda bills are based on the number of requests made and the time spent executing. To improve overall execution time, review your application’s code and its dependencies. You can also make calls to external resources outside the handler, in the Lambda execution environment’s initialization code, and reuse the responses in subsequent invocations. Another strategy, in specific circumstances, is to employ TTL mechanisms within your function handler code. This makes it possible to refresh data that changes over time without making an additional external call on every invocation, which would add to the execution time.
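The reuse-and-TTL strategy described above can be sketched as a small cache that lives at module level, so warm invocations in the same execution environment share it. This is an illustration under stated assumptions: `TTLCache`, `fetch_exchange_rates`, the placeholder rate value, and the 300-second TTL are hypothetical, and a real function would replace the fetcher with its own external call.

```python
import time


class TTLCache:
    """Cache the result of `fetch` and refresh it once `ttl_seconds` have passed."""

    def __init__(self, fetch, ttl_seconds, clock=time.monotonic):
        self.fetch = fetch
        self.ttl = ttl_seconds
        self.clock = clock
        self.value = None
        self.expires_at = None  # None means nothing has been fetched yet

    def get(self):
        now = self.clock()
        if self.expires_at is None or now >= self.expires_at:
            self.value = self.fetch()       # the only place the external call happens
            self.expires_at = now + self.ttl
        return self.value


def fetch_exchange_rates():
    """Hypothetical stand-in for a slow external call (API, database, parameter store)."""
    return {"USD_EUR": 0.9}  # placeholder value, not real data


# Module-level state survives across warm invocations of the same environment.
RATES = TTLCache(fetch_exchange_rates, ttl_seconds=300)


def handler(event, context):
    rates = RATES.get()  # external call only on first use or after the TTL expires
    return {"statusCode": 200, "rate": rates["USD_EUR"]}
```

The TTL is the trade-off knob: a longer value saves more billed time per invocation but serves staler data.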

Sustainability

The sixth pillar of the AWS Well-Architected Framework is all about sustainability. This pillar evaluates your workload’s design, architecture, and implementation, focusing on reducing energy consumption and improving efficiency.

AWS customers can significantly reduce their energy usage compared to traditional on-premises deployments, thanks to the capabilities offered by AWS. These include higher server utilization, power and cooling efficiency, custom data centre design, and the company’s commitment to powering its operations with 100% renewable energy by 2025.

When designing applications on the cloud, AWS emphasizes certain design principles to achieve sustainability:

- Understanding and measuring business outcomes and their impact on sustainability and setting performance indicators to evaluate improvements.

- Right-sizing each workload to maximize energy efficiency.

- Setting long-term goals for each workload and designing an architecture that reduces impact per unit of work, such as per user or operation.

- Continuously evaluating hardware and software choices for efficiency and designing for flexibility.

- Using shared, managed services to reduce the infrastructure needed to sustain a broader range of workloads.

- Reducing the resources or energy needed to use your services and minimizing the need for customers to upgrade their devices.

Six Pillars, One Review!

This blog introduced the best practices for following the AWS Well-Architected Framework for serverless applications and provided examples to make it easy to understand. However, it’s a good idea to connect with experienced AWS professionals when determining if your existing applications and workloads are following the framework.

A well-architected review of your application can provide a step-by-step roadmap for optimizing costs, performance, operational excellence, and other essential aspects of your business.

WTA Studios, an AWS Advanced Consulting Partner, has helped many customers assess their mission-critical applications against the Well-Architected Framework. Our team of AWS-certified experts specializes in building, assisting, and deploying serverless apps using AWS-recommended best practices. If you’re looking for a similar experience, contact us today!

Manish Surapaneni

A Visionary & Expert in enhancing customer experience design, build solutions, modernize applications and leverage technology with Data Analytics to create real value.
